Simulated Transfer Interface Kit (STIK): Physical Data Theft Mechanics

Game design logic for handling immersive, physics-based data transfer, interruption penalties, and physical item states.

Title: Professional concept and systems design work for GRX Immersive Labs

Category: Immersive learning, AI literacy, didactic storytelling, and interactive systems design

This project began as an immersive-learning and AI-literacy experience adapted from the Points of View short-film material to fit GRX Immersive Labs' broader stack: teaching players about surveillance bias, AI systems, public engagement, and entry-level machine-learning concepts through story and interaction. The action-heavy drone and stealth branch documented later on this page was a stakeholder-driven pivot, not the original center of the concept.

The full internal technical doc had 43 Unity inspector and pipeline screenshots. The subset below is hosted under /images/data-division/pov-doc/. Omitted on purpose: pure Unity Player Settings / Tags & Layers / Physics Matrix frames (image24, image26–image28) and redundant manager shots (image42 TimeManager, image43 CameraStateManager). Activity-type stills (time trials, target range, elimination) are inlined on Game Modes.

Points of View was initially framed as an immersive action-adventure / detective VR experience in which the player would learn AI and machine-learning concepts through play. This game branch was adapted from the earlier short-film material rather than invented in a vacuum. The intended fantasy was not simply "control a cool drone." It was to join a research program, build a buddy AI-drone, and learn responsible AI usage inside a lived-in cyberpunk setting shaped by surveillance, bias, and public-systems tension.

The original pillars were Immersive, Learning, Cyberpunk, and Gritty. The strongest unique point was the combination of gamified AI/ML learning with a bond-driven human-computer interaction loop: the player teaches the drone, and the drone teaches the player. That meant the educational layer was supposed to be embedded into the game itself through missions, modules, tablet interactions, data cleaning, fine-tuning, and visible system behavior.

Mechanically, the player experience was supposed to move between workshop, apartment, training spaces, and the city itself. The player would create and modify the drone, interface with it through a diegetic tablet, diagnose corrupted datasets, retrain subsystems, and gradually learn how different AI components behave. The educational intention was direct: make machine-learning ideas physically graspable through a cyberpunk lived experience rather than treat them as detached courseware.

The project later bent harder toward action because stakeholder attention kept returning to immediate loops, spectacle, and drone-centered activity. That is where more of the run-based stealth, extraction, and direct-action structure came from. In other words, the later prototype branch did not replace the initial concept so much as displace it. The AI-drone stopped being primarily a teaching vector and started carrying more of the action fantasy burden.

That shift also increased scope materially. It pushed development toward shopping for and integrating additional Unity assets, including FPS-template-adjacent support, to satisfy the demand for stronger action loops. In practice, that meant engineering time was pulled away from the core data-collection prototype and into extra combat/action scaffolding that was not the original center of the project.

That distinction matters for portfolio purposes. The linked system documents below are real design work, but many of them reflect the later prototype branch rather than the original AI-literacy-first center. In blunt terms, the action-loop push expanded scope faster than the project could absorb, and that expansion was one of the things that helped kill it.

The useful way to read this work is as a real prototype branch and delivery package from a project that was sunset in June 2024. It is not unresolved work from a still-active production; it is closed professional work from a project that was not carried forward.

The implemented branch was run-based. Each run, the player was assigned a randomized profession that constrained what items and behaviors were plausible in the neighborhood hub.

The original paid scope was narrower than the eventual prototype work shown here. At its simplest, the contracted role was preproduction and conceptual design support rather than full implementation ownership.

In practice, the prototype and systems work later documented on this page exceeded the formal scope. It did not arise from a contract revision that cleanly expanded the role.

Beyond the playable and architectural work, the delivery package also included pitch-facing material: a pitch deck starter, a pitch document, an email pitch, and a SWOT analysis. That pitch package was adapted from the short-film source material and translated into a game-production and funding frame.

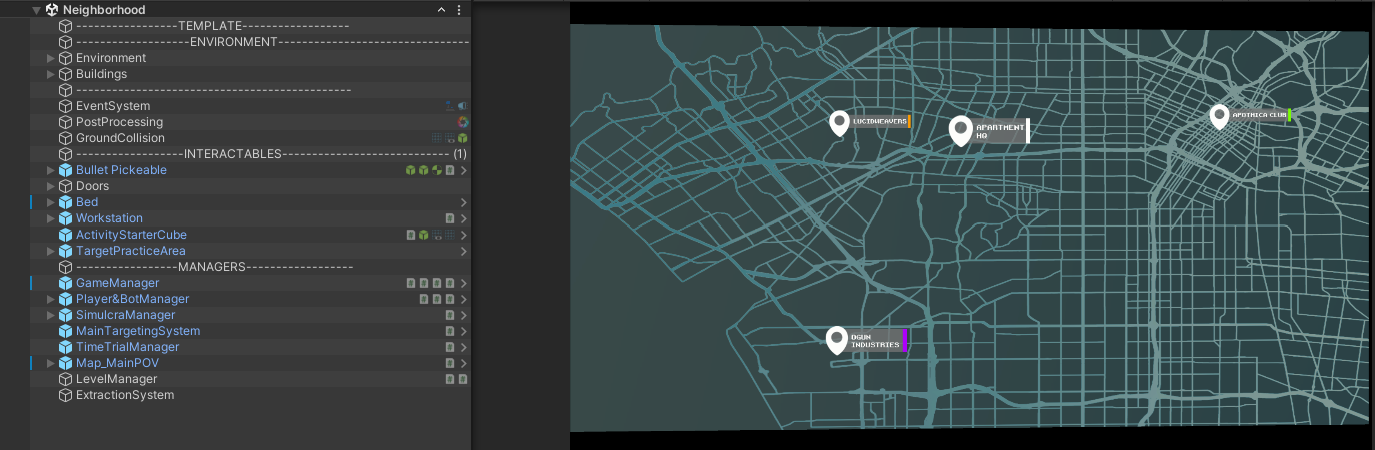

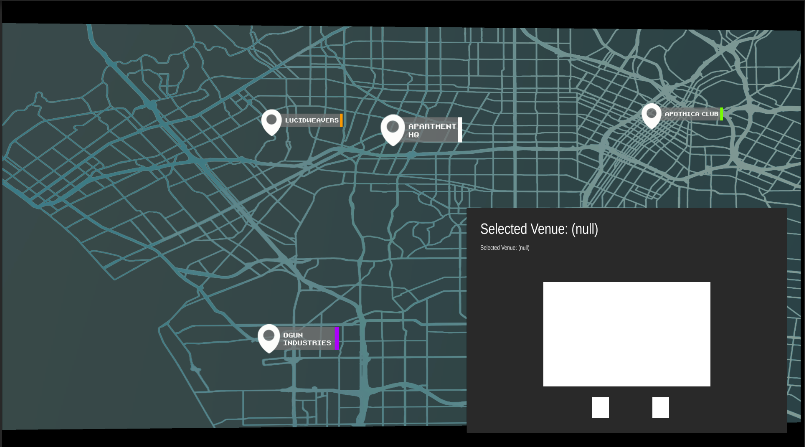

Authorship-wise, the game-engine implementation, systems design, and prototype-side production work documented here were mine, including work completed with AI assistance. This was not a real team structure. It was mostly solo work: I carried the detailed design, systems, and production substance, and one other person then helped translate that material into stronger pitch-facing mockups and deck presentation outside the engine. A good example is the map: the mockup direction was prepared outside the engine, but the in-engine venue selection, selected-venue display, and runtime integration were mine.

One proposed system that never reached implementation was an Onyx ML-driven parole officer NPC that would periodically visit the player's neighborhood hub to perform a pass/fail inspection. The officer would check whether the player's possessions and behavior were consistent with their randomized profession. Holding a stockpile of items that did not fit that profession would trigger suspicion. This was designed as a persistent tension layer for managing the player's visible footprint between runs.
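Because this system was never implemented, the following is only a minimal sketch of how the pass/fail inspection could work, written in Python for readability rather than in the project's Unity code. The profession names, item tags, the `PLAUSIBLE_ITEMS` table, and the `tolerance` threshold are all hypothetical, and the ML-driven part of the officer is not modeled; the sketch covers only the possession check against the randomized profession.

```python
import random
from dataclasses import dataclass

# Hypothetical table: which item tags are plausible for each profession.
PLAUSIBLE_ITEMS = {
    "courier": {"parcel", "scooter_key", "flashdrive"},
    "technician": {"gpu", "flashdrive", "toolkit"},
}


@dataclass
class InspectionResult:
    passed: bool
    suspicious_items: list


def assign_profession(rng):
    """Pick the profession for a run (sorted for reproducible seeding)."""
    return rng.choice(sorted(PLAUSIBLE_ITEMS))


def inspect(profession, possessions, tolerance=1):
    """Fail the inspection when more than `tolerance` items do not fit.

    `tolerance` is an assumed stand-in for "a stockpile": a single odd
    item is ignored, while several out-of-profession items fail the check.
    """
    allowed = PLAUSIBLE_ITEMS[profession]
    suspicious = sorted(item for item in possessions if item not in allowed)
    return InspectionResult(
        passed=len(suspicious) <= tolerance,
        suspicious_items=suspicious,
    )


profession = assign_profession(random.Random(7))
result = inspect("courier", ["parcel", "gpu", "toolkit"])
```

Keeping the check as a pure function over a profession and a list of possessions would let the same rule run at inspection time and as a preview warning on the player's tablet, though that second use is speculation rather than documented design.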

The core prototype was the data-collection loop itself: downloading, carrying, routing, transitioning between spaces, and feeding the workstation/economy path. That part was the center and it was working. The harder extended-scope problem was persistent object state across runs. That would have taken the project further, but it was not required to make the prototype viable; the backend could have been scoped down into a more data-driven or spreadsheet-like path built off larger ScriptableObject-driven state if the project had continued.
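As an illustration of what a "spreadsheet-like" path for persistent object state could look like, the sketch below stores each object as one flat row and round-trips the rows through CSV text. It is written in Python to show the data shape only; it is not the project's ScriptableObject code, and the `ObjectState` fields (`object_id`, `scene`, position, `data_units`) are assumptions chosen for the example.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ObjectState:
    """One row of persistent state; every field name here is hypothetical."""
    object_id: str
    scene: str
    x: float
    y: float
    z: float
    data_units: int


def dump_states(states):
    """Serialize object states to CSV text, one row per object."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=[f.name for f in fields(ObjectState)]
    )
    writer.writeheader()
    for state in states:
        writer.writerow(asdict(state))
    return buffer.getvalue()


def load_states(text):
    """Rebuild object states from CSV text at the start of the next run."""
    reader = csv.DictReader(io.StringIO(text))
    return [
        ObjectState(
            object_id=row["object_id"],
            scene=row["scene"],
            x=float(row["x"]),
            y=float(row["y"]),
            z=float(row["z"]),
            data_units=int(row["data_units"]),
        )
        for row in reader
    ]


saved = [
    ObjectState("flashdrive_01", "apartment", 1.5, 0.0, -2.25, 40),
    ObjectState("gpu_03", "workshop", 0.0, 1.0, 3.0, 0),
]
restored = load_states(dump_states(saved))
```

A flat row format like this trades flexibility for inspectability: state can be read and edited in a spreadsheet, at the cost of not capturing nested or per-component data.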

This project is documented as a series of discrete system pages. For the full collection of design architecture, visit the Game Design index.

Game design logic for handling immersive, physics-based data transfer, interruption penalties, and physical item states.

Game design logic for handling immersive deployment and extraction timers within Data Division's mission structure.

Game design logic for handling in-game economies, vendor markups, and physical delivery mechanics.

A shared diegetic UI controller for contextual HUD transitions across Project Longbow and Data Division.

Game design logic for handling in-world activities such as target practice, time trials, and elimination-mode bot fighting in Data Division.

Professional game design documentation for pixelshifted objects, drone-only visibility, and cross-reality collectable logic in Data Division.

Professional game design documentation for the modular item architecture, Flashdrive utility items, and trader keyword logic in Data Division.

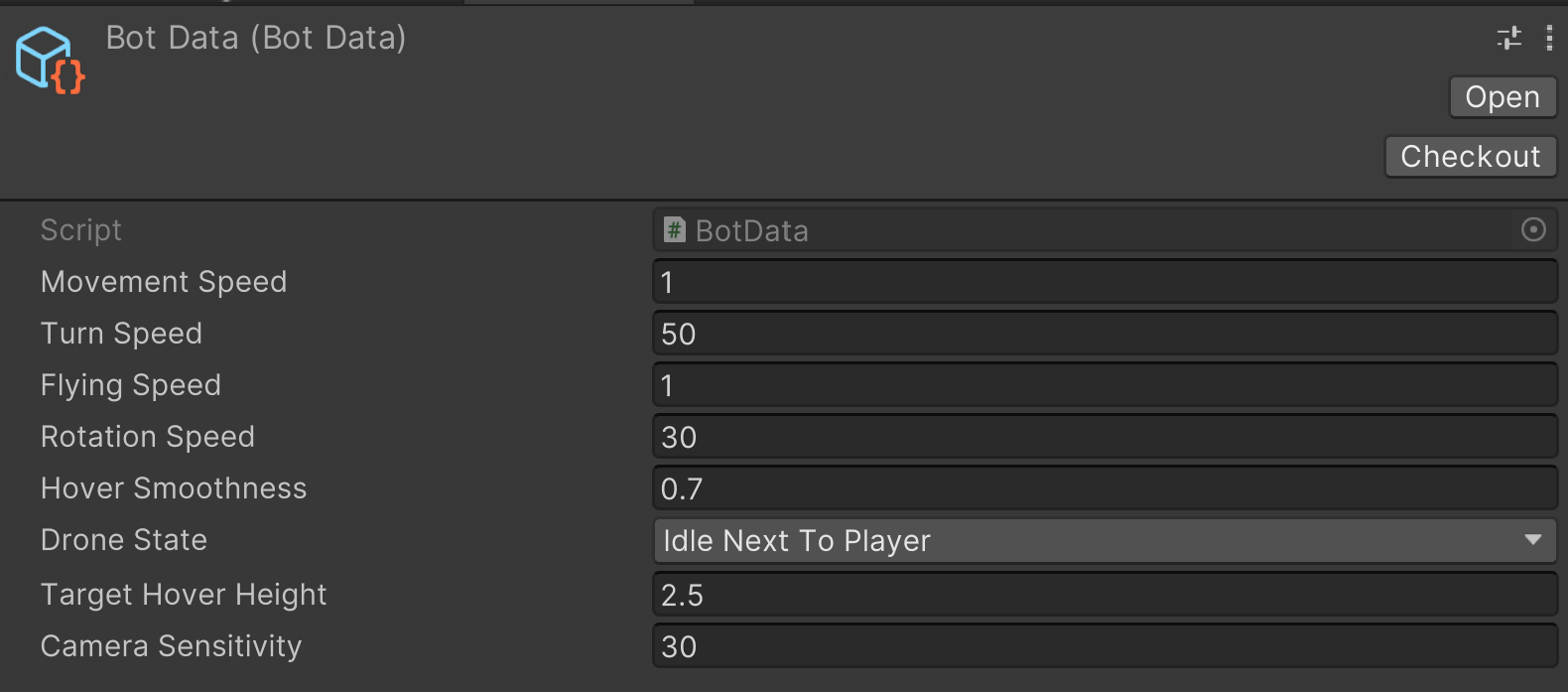

Professional game design documentation for BotManager, BotData, Drone integration, and the custom logic layered over a third-party controller in Data Division.

Game design logic for handling in-world diegetic tablets and UI state management.

Professional game design documentation for PlayerData and the dependent systems stack that supported Data Division.

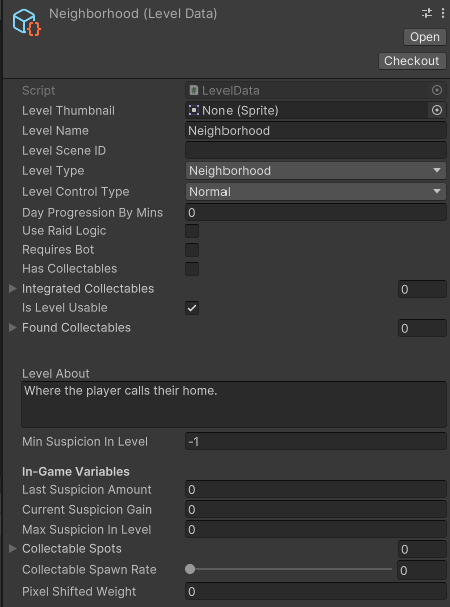

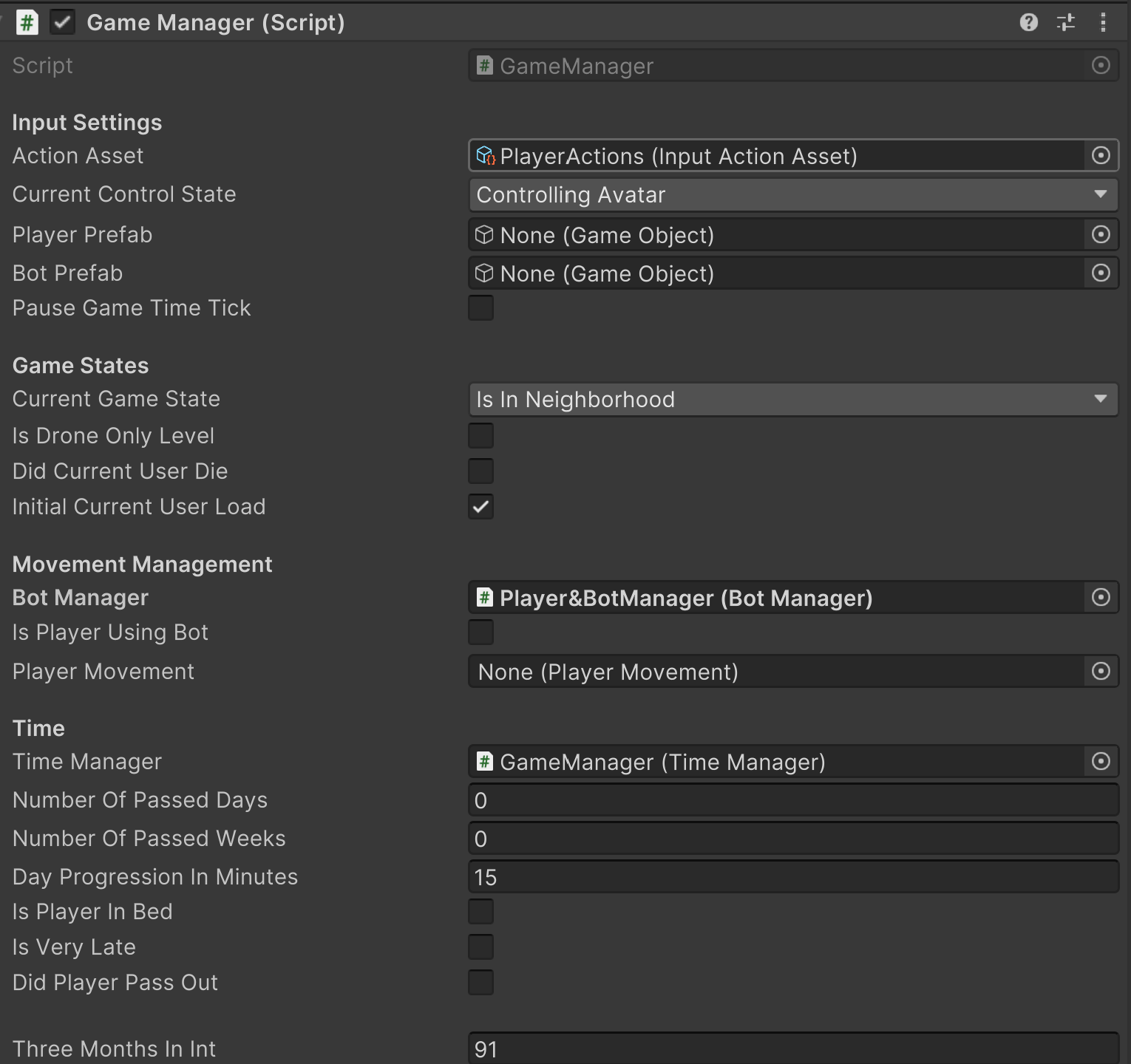

Professional game design documentation for LevelManager, LevelData, scene initialization stages, and map-driven venue selection in Data Division.

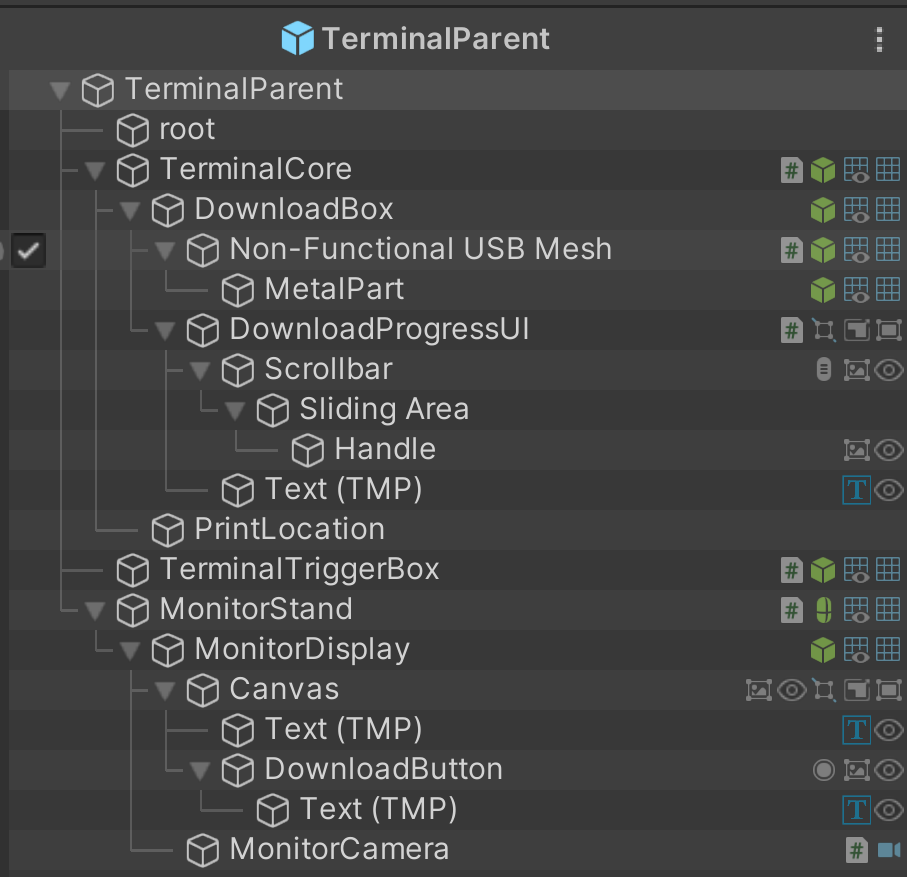

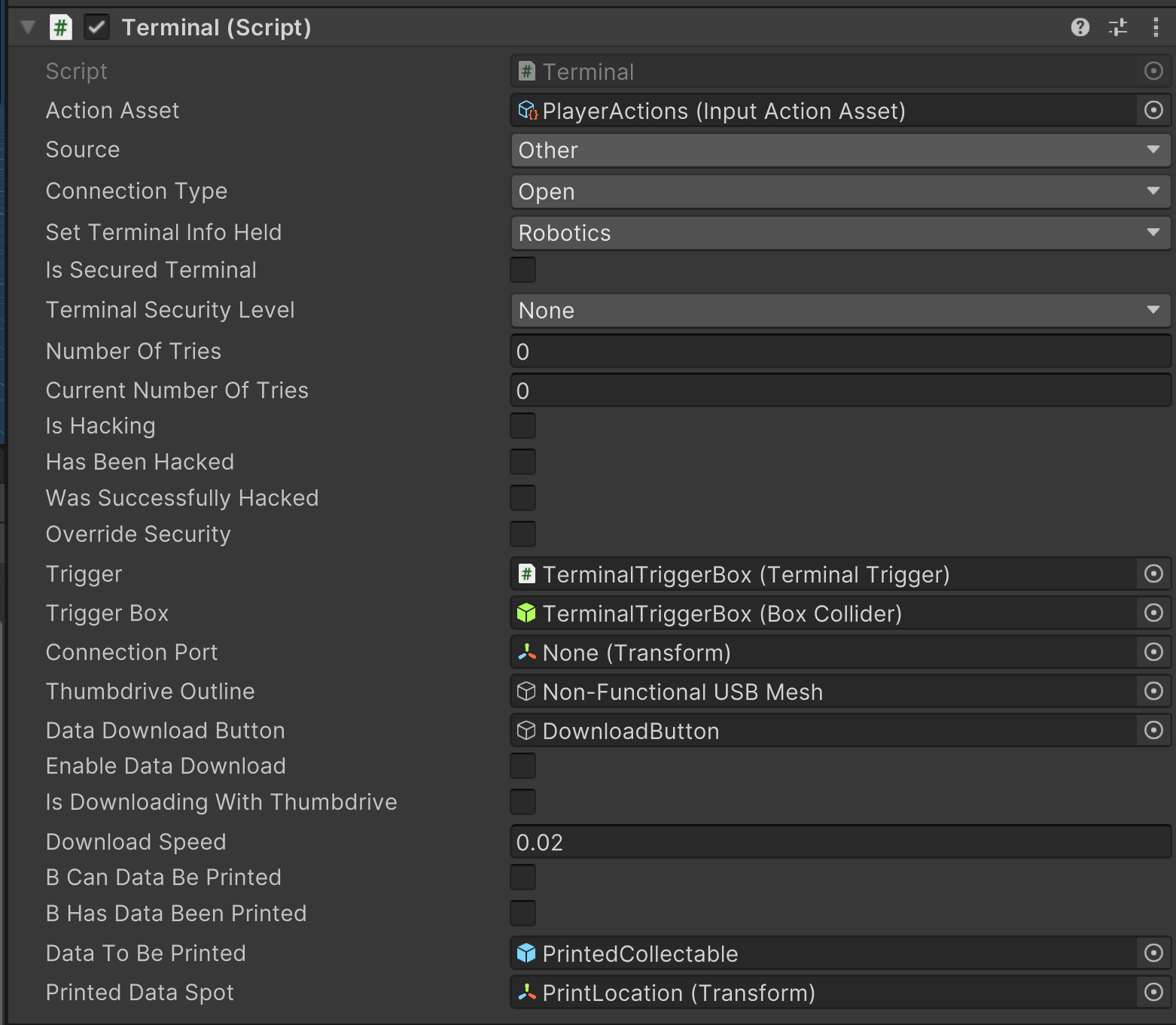

Professional game design documentation for terminal access, thumb-drive downloads, printing, and diegetic device connections in Data Division.

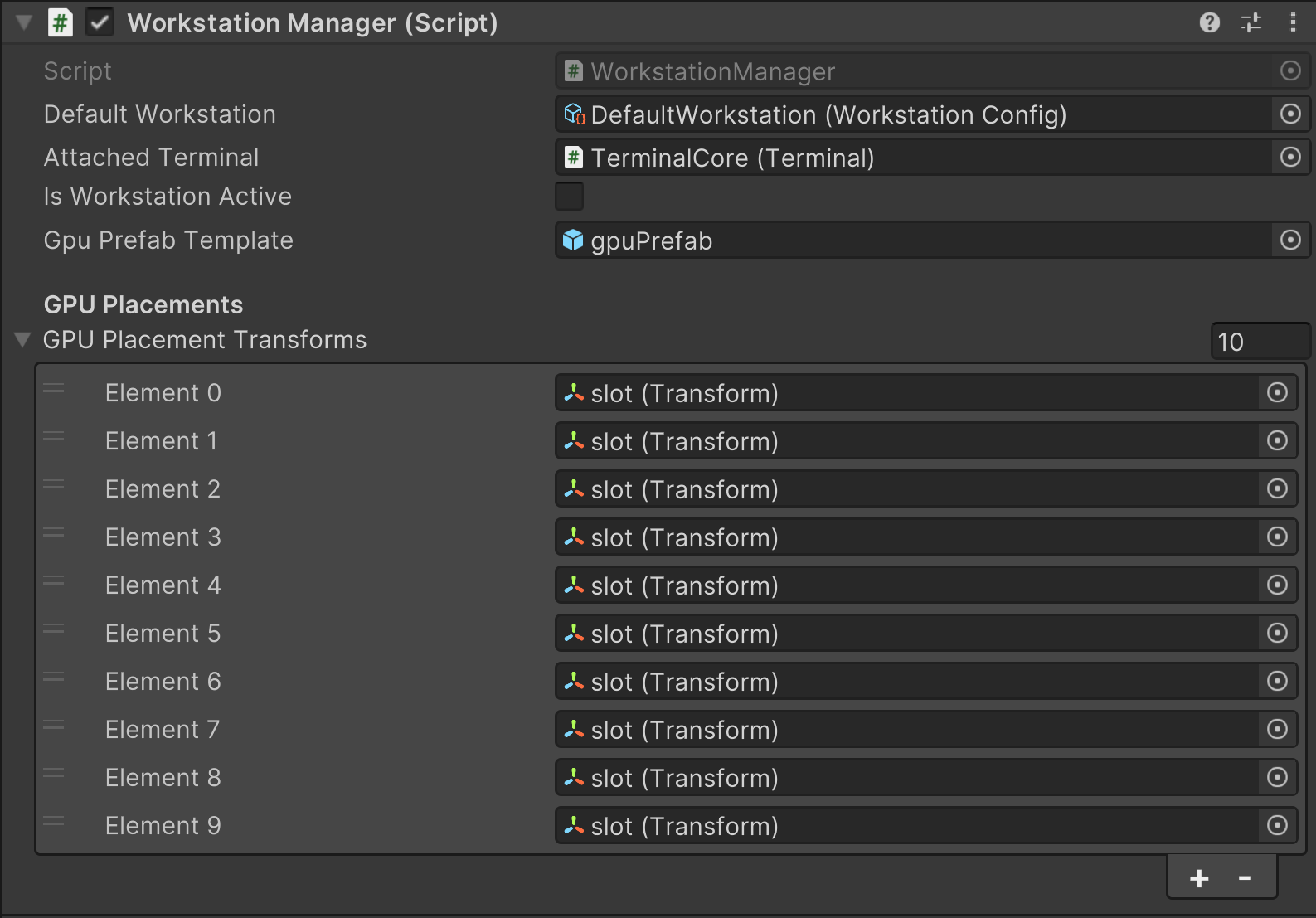

Professional game design documentation for the workstation, GPUs, flashdrives, databases, and data synthesis loop in Data Division.

Game design logic for handling immersive player inventories, heavy items, and weapon controller integration.