Bot and Drone System

Category: Companion Systems, Movement Architecture, and Asset Integration

Context: This page documents the bot/drone layer in Data Division. In the original notes, bot and drone were used interchangeably. Bot was the agnostic systems label; drone was the actual current implementation.

Terminology

Inside the project, Bot and Drone mean the same thing.

That naming choice was intentional. Bot was the broader term in case the project later pivoted into a different machine-companion format. In the implemented branch, the active companion was a drone.

What Was Custom vs. What Was Integrated

This is the main portfolio point:

- the top-level movement stack was bridged from a third-party asset

- the companion-management layer and some behavioral logic were mine

The integrated asset side came from Drone Controller Pro and included:

- DroneMovement

- PropellerMovement

- SparkCollisionDetection

- AudioController

What I created and layered on top before and during that integration included:

- Drone

- BotFollow

- FireMechanism

- DroneFlightManagement

- BotData

- BotManager

So this was not “I built an entire drone controller from zero.” It was more specific and more useful than that: I bridged a purchased controller into the project architecture, added an override path, and built the project-specific management layer around it.

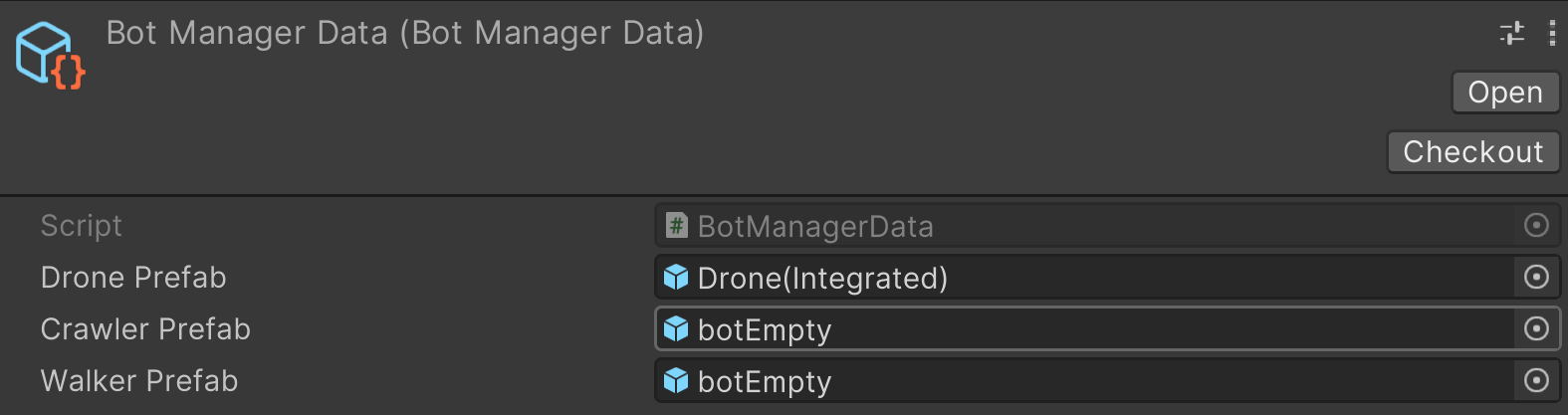

BotManagerData

The management side began with BotManagerData.

This asset defines what bot prefabs are available to the manager:

- Drone Prefab

- Crawler Prefab

- Walker Prefab

Even though only the flyer drone reached a functional state, the architecture was already prepared for a broader bot family.

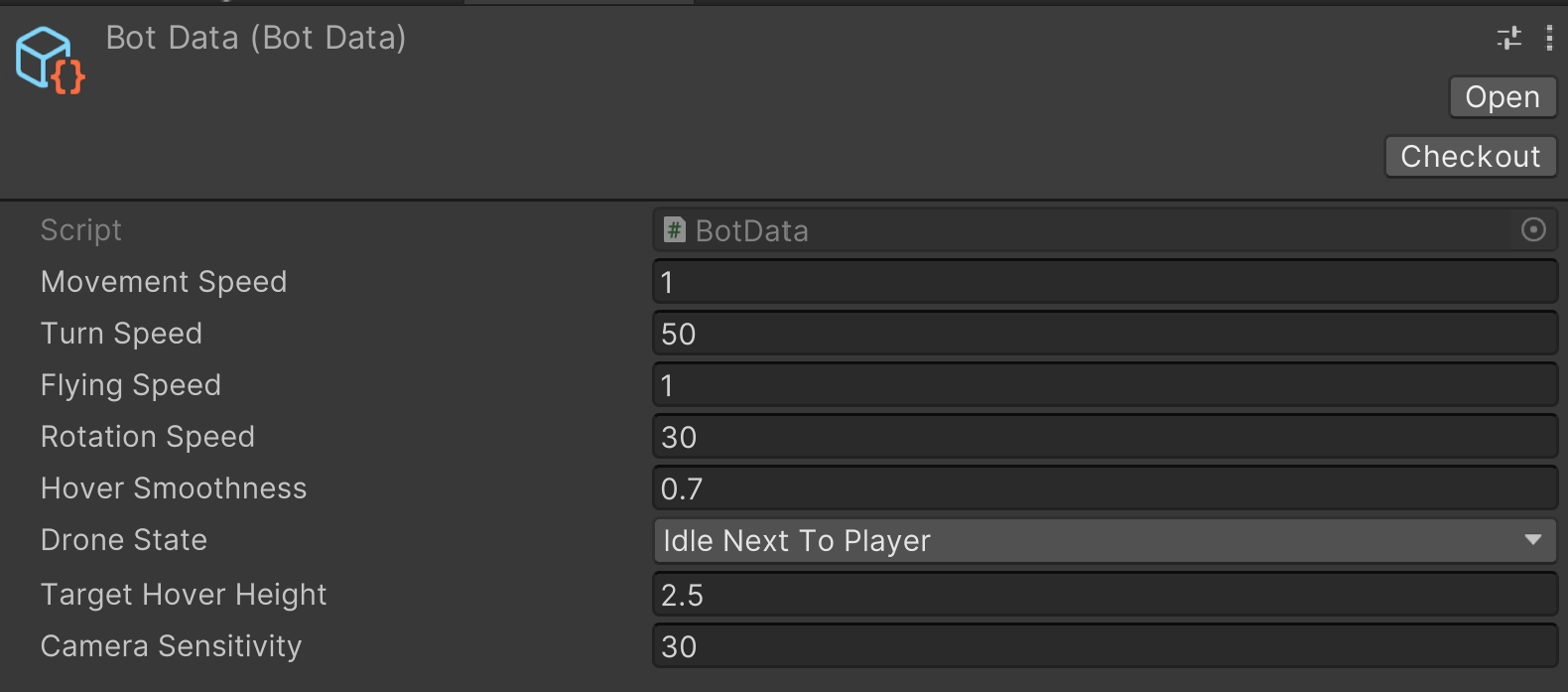

BotData

At the instance-behavior level, the companion uses BotData.

This stores values such as:

- movement speed

- turn speed

- flying speed

- rotation speed

- hover smoothness

- current drone state

- target hover height

- camera sensitivity

That is the useful split:

- BotManagerData decides what bot types exist

- BotData decides how an active bot behaves
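The split can be sketched in a few lines. This is an engine-agnostic Python model, not the project's actual assets: the field names, the default values, the `BotType` and `DroneState` enums, and the `available_types` helper are all assumptions chosen to mirror the lists above.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class BotType(Enum):
    # Hypothetical enum mirroring the three prefab slots listed above.
    DRONE = auto()
    CRAWLER = auto()
    WALKER = auto()


class DroneState(Enum):
    # Hypothetical states; the page only says a "current drone state" is stored.
    FOLLOWING = auto()
    CONTROLLED = auto()


@dataclass
class BotManagerData:
    """Which bot types exist: maps each type to a prefab reference (a name here)."""
    prefabs: dict = field(default_factory=dict)

    def available_types(self):
        # Only types with an assigned prefab can be spawned.
        return [t for t, prefab in self.prefabs.items() if prefab is not None]


@dataclass
class BotData:
    """How one active bot behaves. All default values are placeholders."""
    movement_speed: float = 5.0
    turn_speed: float = 90.0
    flying_speed: float = 8.0
    rotation_speed: float = 120.0
    hover_smoothness: float = 0.5
    target_hover_height: float = 2.0
    camera_sensitivity: float = 1.0
    state: DroneState = DroneState.FOLLOWING


manager_data = BotManagerData(prefabs={
    BotType.DRONE: "DronePrefab",
    BotType.CRAWLER: None,  # slot left empty in this sketch (an assumption)
    BotType.WALKER: None,   # slot left empty in this sketch (an assumption)
})
drone_config = BotData(flying_speed=10.0)

print(manager_data.available_types())
print(drone_config.state)
```

Keeping the two objects separate means a new bot type only touches the manager-side data, while tuning how a bot feels only touches its behavior data.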

The Integrated Drone Prefab

The actual drone prefab used in the project was the integrated drone from the asset stack, partly because the originally intended drone model had unresolved LOD issues.

That is worth saying plainly in a portfolio context. The point is not to pretend the art or prefab source was all custom; the point is to show how the system was made production-usable.

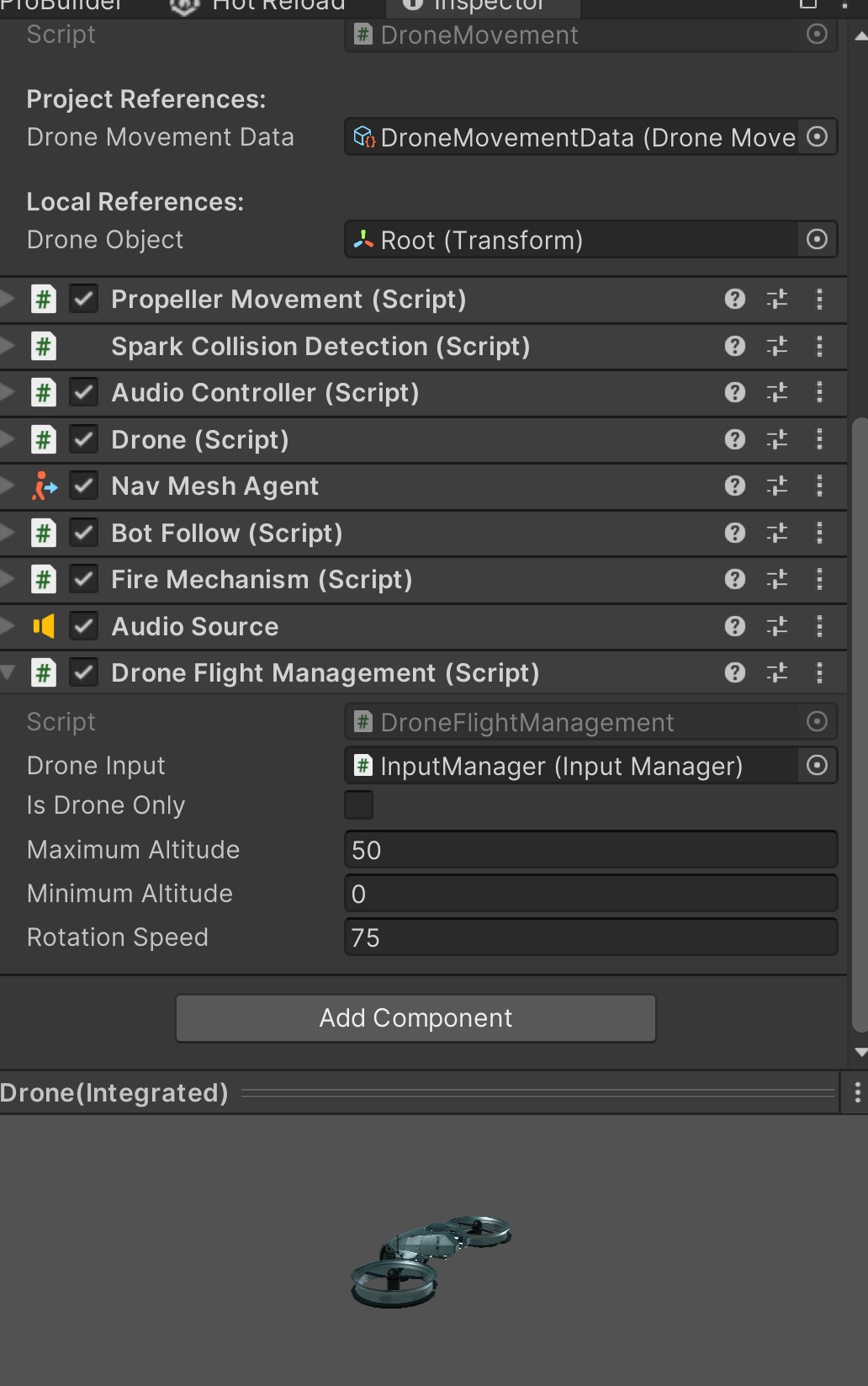

Component Stack

The integrated object carried both asset-side and custom-side components.

Visible stack highlights:

- DroneMovement

- Propeller Movement

- Spark Collision Detection

- Audio Controller

- Drone

- Nav Mesh Agent

- Bot Follow

- Fire Mechanism

- Drone Flight Management

This is exactly why the system is portfolio-worthy: it shows practical integration work, not just theoretical design.

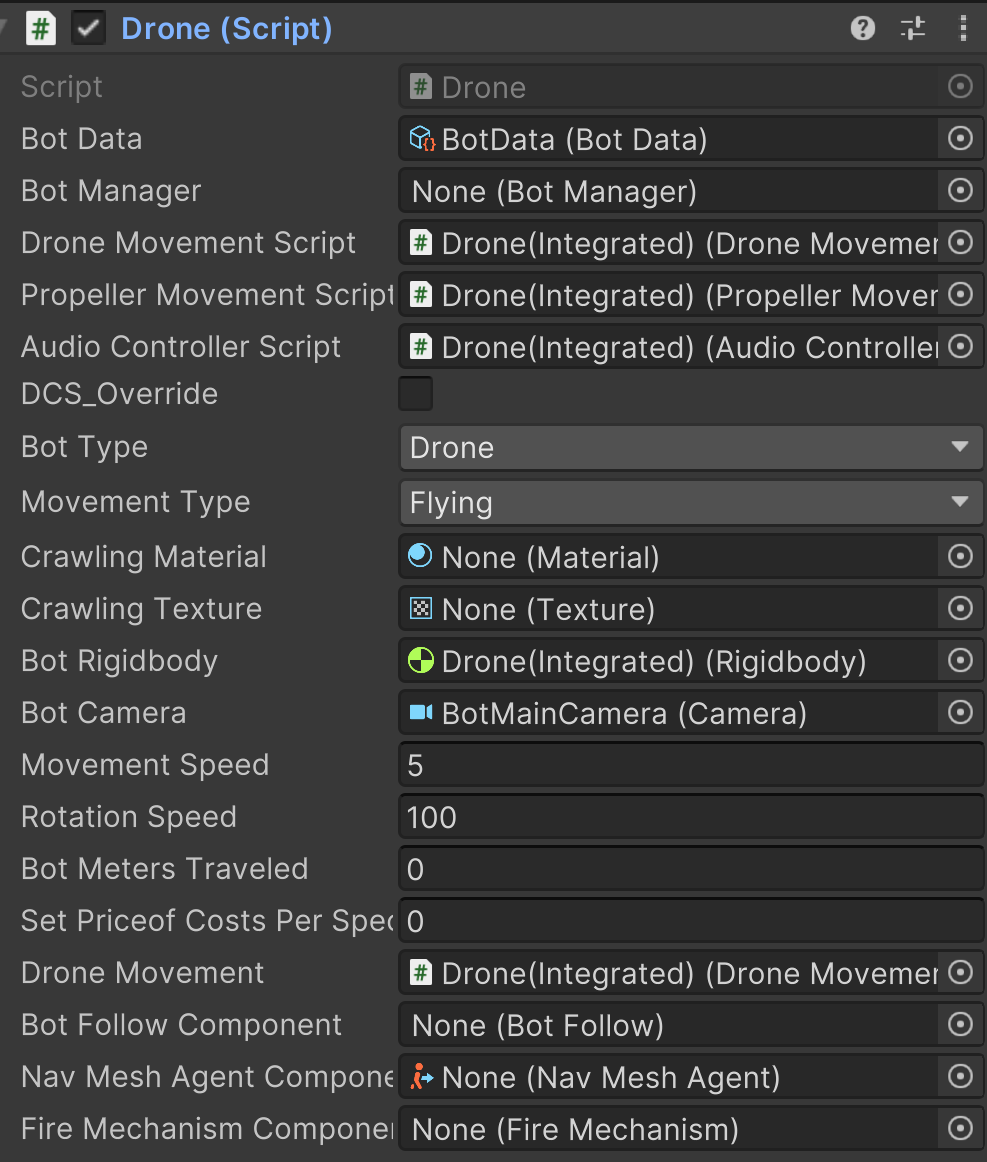

The Custom Drone Script

The custom Drone script is where the project-specific bridge becomes legible.

That layer references:

- BotData

- BotManager

- the integrated movement/audio scripts

- DCS_Override

- bot type

- movement type

- rigidbody

- bot camera

- movement and rotation speed

That is the relevant engineering point. I did not just drop an asset into the project and call it done. I wrapped it into the rest of the game’s architecture.
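One way to picture that wrapping is a thin class that holds the project-side data and decides whether input goes to the purchased script or around it. The sketch below is a plain-Python model under stated assumptions: `AssetDroneMovement` and its `apply_input` method are stand-ins and not the asset's real API, and treating the override as a boolean that swaps the movement path is only a guess at what DCS_Override does.

```python
from dataclasses import dataclass


@dataclass
class BotData:
    # Placeholder values; only the field names echo this page.
    movement_speed: float = 5.0
    rotation_speed: float = 120.0


class AssetDroneMovement:
    """Stand-in for the purchased movement script. Not the asset's real API."""

    def __init__(self):
        self.velocity = (0.0, 0.0, 0.0)

    def apply_input(self, x, y, z):
        # A real controller would apply forces; here the input is just recorded.
        self.velocity = (x, y, z)


class Drone:
    """Hypothetical wrapper that routes input to the asset or around it."""

    def __init__(self, bot_data, asset_movement, override=False):
        self.bot_data = bot_data
        self.asset_movement = asset_movement
        self.override = override  # assumed reading of DCS_Override
        self.velocity = (0.0, 0.0, 0.0)

    def move(self, x, y, z):
        if self.override:
            # Override path: the project computes velocity from BotData itself.
            s = self.bot_data.movement_speed
            self.velocity = (x * s, y * s, z * s)
        else:
            # Default path: hand the raw input to the integrated asset script.
            self.asset_movement.apply_input(x, y, z)
            self.velocity = self.asset_movement.velocity
        return self.velocity


asset = AssetDroneMovement()
drone = Drone(BotData(movement_speed=4.0), asset)

passthrough = drone.move(1.0, 0.0, 0.5)  # asset path
drone.override = True
overridden = drone.move(1.0, 0.0, 0.5)   # project path

print(passthrough, overridden)
```

The rest of the game only talks to the wrapper, so the asset can be bypassed or replaced without touching callers.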

Follow vs. Direct Control

BotFollow handled the drone following the player through a NavMeshAgent when the player was not actively controlling it.

When the player switched into direct drone control, the movement stack changed over to the active drone-control layer instead.

That split mattered because it let the same companion behave as:

- a follower

- a controlled scout

- a mission tool

instead of forcing one mode all the time.

```mermaid
graph TD
    A[Bot Spawned or Assigned] --> B{Player Controlling Drone?}
    B -- No --> C[BotFollow plus NavMeshAgent]
    B -- Yes --> D[Direct Drone Control]
    D --> E[DroneMovement Stack]
    E --> F[Perception / Scouting / Mission Task]
    C --> G[Idle Next To Player or Follow State]
    G --> B
    F --> B
```
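The same branching can be written as a small two-mode state machine. This is a simplified Python model, not the project's scripts: the `Companion` class, its method names, the one-dimensional position, and the `follow_distance` parameter are all hypothetical, and the follow branch only approximates what pathfinding through a NavMeshAgent would do.

```python
from enum import Enum, auto


class Mode(Enum):
    FOLLOW = auto()  # the BotFollow plus NavMeshAgent branch
    DIRECT = auto()  # the direct drone control branch


class Companion:
    """Hypothetical companion with one position axis to keep the sketch small."""

    def __init__(self, follow_distance=2.0):
        self.mode = Mode.FOLLOW
        self.position = 0.0
        self.follow_distance = follow_distance

    def set_player_control(self, controlling):
        # The decision node: is the player controlling the drone?
        self.mode = Mode.DIRECT if controlling else Mode.FOLLOW

    def tick(self, player_position, stick_input=0.0, dt=1.0, speed=1.0):
        if self.mode is Mode.DIRECT:
            # Direct control: player input moves the drone.
            self.position += stick_input * speed * dt
        else:
            # Follow: close the gap until within follow_distance, then idle.
            gap = player_position - self.position
            if abs(gap) > self.follow_distance:
                step = min(speed * dt, abs(gap) - self.follow_distance)
                self.position += step if gap > 0 else -step
        return self.position


bot = Companion(follow_distance=2.0)
follow_trace = [bot.tick(player_position=5.0) for _ in range(4)]

bot.set_player_control(True)
scouted = bot.tick(player_position=5.0, stick_input=-1.0)

bot.set_player_control(False)
returned = bot.tick(player_position=5.0)

print(follow_trace, scouted, returned)
```

Because the mode is checked on every tick, handing control back to the follow branch needs no special transition logic: the follower simply resumes closing the gap from wherever the player left the drone.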

Why This System Mattered

The drone was not just a gadget. It was one of the main identity pieces of the project.

At different points it was expected to support:

- companion fantasy

- surveillance fantasy

- stealth and scouting

- later AI-literacy framing

- possible alignment behavior shaped by data routing

So even though the raw movement controller was integrated from an asset, the project-specific work around it was central:

- companion type management

- data-driven bot configuration

- follow/control state handling

- custom wrapper script

- connection into the rest of the player’s systems

That is why this deserves its own page in the Data Division portfolio rather than being buried inside one long technical export.